AI models got 280 times cheaper in two years. They also got dramatically better. GPT-4-level reasoning now runs on open-source models you can host on a single machine. The technology has never been more accessible.

And yet, the project abandonment rate more than doubled. In 2024, 17% of companies walked away from most of their AI initiatives. In 2025, that number hit 42% (S&P Global). The average organization scrapped nearly half of its proofs of concept before they reached production.

Better technology. Worse outcomes. If AI were a technology problem, this trend would run in the opposite direction.

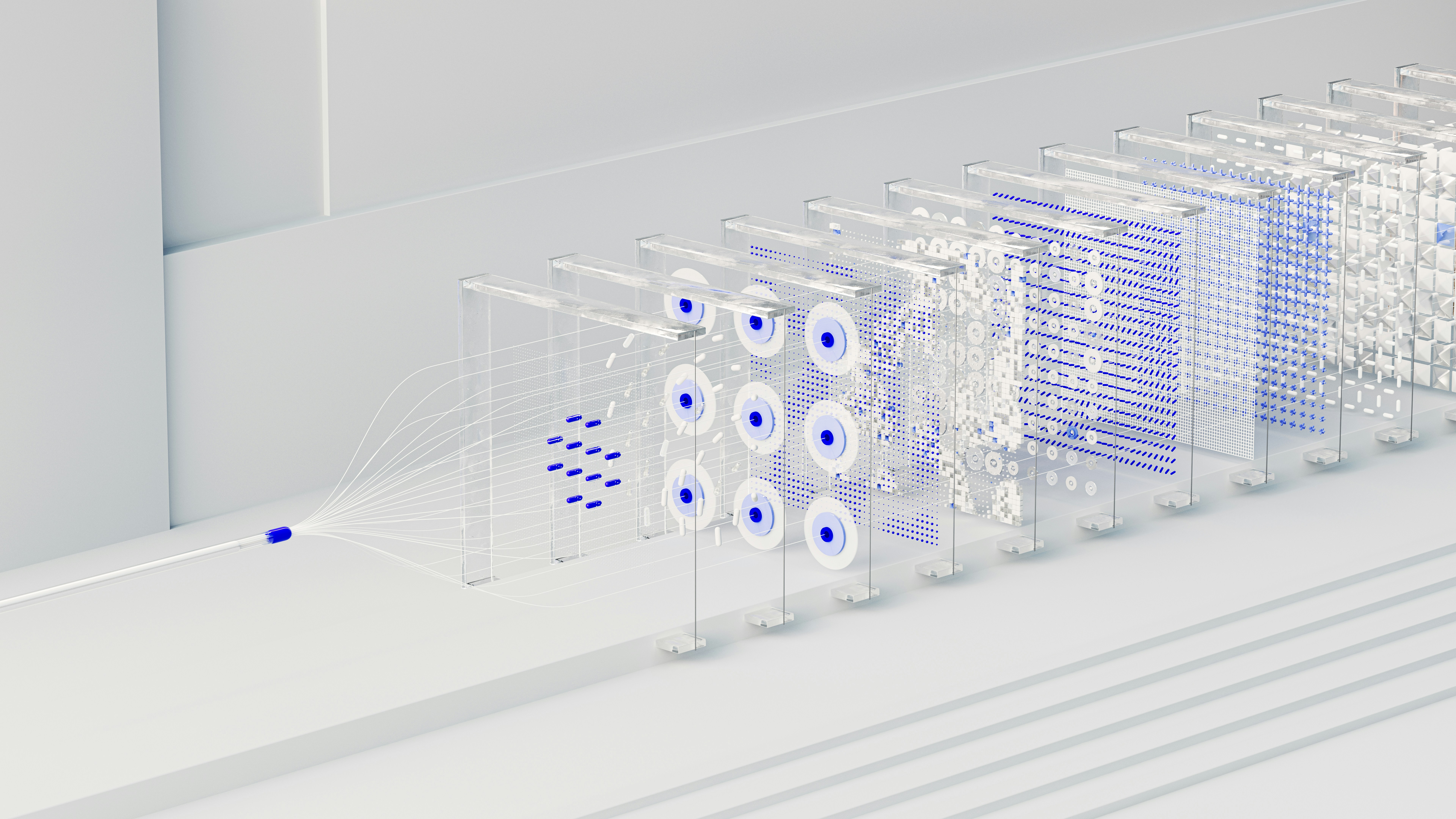

It doesn’t, because AI was never a technology problem. It’s a design problem. Most organizations don’t know how to design the workflows that make AI useful in their actual operations. They buy tools. They run pilots. They watch demos. Then nothing changes in how the company actually works.

The Workflow That Doesn’t Exist

Think about what happens when a potential client calls a law firm. The phone rings. Someone answers, or doesn’t. The caller explains their situation. Someone decides whether it’s a viable case. Information gets entered into a case management system. A conflict check runs. An attorney gets assigned. A retainer letter goes out. The client gets a follow-up call.

That’s nine steps. Each one involves judgment calls, exceptions, and handoffs between people and systems. Each one has a failure mode. The phone goes to voicemail because the receptionist is on another call. The intake form sits in someone’s email for three hours. The conflict check requires pulling records from two systems that don’t talk to each other.

AI can handle parts of this. An AI agent can answer the phone, qualify the caller, extract case details, and enter them into the system. But only if someone has designed exactly how that works. Which questions does the AI ask? What counts as a qualified lead? When does it hand off to a human? What happens when the caller speaks Spanish? What happens when the AI misclassifies a case type?
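One way to make these questions concrete is to write the answers down as explicit rules. The sketch below is hypothetical: the field names, the supported languages, the accepted case types, and the 0.8 threshold are all illustrative assumptions, not a recommended intake policy.

```python
from dataclasses import dataclass

# All values below are illustrative assumptions, not a recommended policy.
SUPPORTED_LANGUAGES = {"en"}          # languages the AI agent handles itself
ACCEPTED_CASE_TYPES = {"personal_injury", "employment"}
MIN_CLASSIFICATION_CONFIDENCE = 0.8   # below this, a human reviews the case type


@dataclass
class IntakeCall:
    language: str            # language code detected at the start of the call
    case_type: str           # the AI's best guess at the case type
    confidence: float        # the AI's confidence in that guess, 0.0 to 1.0
    caller_asked_for_human: bool = False


def next_step(call: IntakeCall) -> str:
    """Decide who handles the call next, checking handoff conditions first."""
    if call.caller_asked_for_human:
        return "handoff:receptionist"
    if call.language not in SUPPORTED_LANGUAGES:
        return "handoff:bilingual_staff"
    if call.confidence < MIN_CLASSIFICATION_CONFIDENCE:
        return "handoff:intake_review"
    if call.case_type not in ACCEPTED_CASE_TYPES:
        return "decline:send_referral"
    return "continue:enter_into_case_system"
```

Every branch here is a business decision someone had to make. A Spanish-speaking caller, for example, reaches `handoff:bilingual_staff` only because someone decided that is what should happen.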

None of these are technology questions. They are design questions. In most organizations, nobody is doing this work.

Why Most AI Stays on the Surface

McKinsey tested 25 organizational attributes to find which one most strongly predicted financial returns from AI. The answer was workflow redesign. Not model selection. Not data quality. Not executive sponsorship. Redesigning how work actually gets done had the single largest effect on whether AI generated real business impact.

But only 21% of organizations using AI have fundamentally redesigned even some of their workflows.

The other 79% are running AI on top of existing processes. Chatbots that answer questions from FAQ pages. Summarizers that condense meeting transcripts. Email drafters that save ten minutes a day. These are real capabilities. They are also surface-level. They don’t change how the company operates. They make individual tasks slightly faster without touching the underlying workflow.

The company can say it “uses AI.” It might even show productivity gains in a quarterly report. But the core operations run the same way they did before. No process has been redesigned. No workflow has been rethought.

Companies default to this pattern because the alternative is hard. Redesigning a workflow means understanding the workflow first. Most companies don’t have their own processes documented clearly enough to hand them to a new employee, let alone to an AI system. So they settle for the safe version. The chatbot. The summarizer. The things that can’t break anything important.

The 70% Nobody Budgets For

BCG studied what separates companies that generate real value from AI from those that don’t. They found a ratio they call 10-20-70. Ten percent of success comes from algorithms. Twenty percent from data and technology. Seventy percent from people, processes, and how work is reorganized around AI.

Most companies invert this. They spend on the 10%. They buy AI tools, sign up for API access, hire a machine learning engineer. Then they discover that the model works fine in isolation but doesn’t connect to how the company actually runs. The data lives in six different systems. The team doesn’t trust the output. Nobody agreed on what the workflow should look like in the first place.

The 70% has no budget line. No job title. No methodology. Yet that’s exactly the part that determines whether AI creates value or becomes another abandoned pilot.

This is showing up in how companies spend. KPMG found that 87% of enterprises now embed managed services into their AI plans. Menlo Ventures reports that 76% of enterprise AI use cases are purchased rather than built internally, up from 53% the prior year. Companies are learning, through expensive failure, that the capability they’re missing isn’t technological. It’s the ability to design how AI fits into their operations.

Sequoia Capital published a piece in March 2026 called “Services: The New Software.” The core observation: for every dollar companies spend on software, they spend six on services. The argument is that selling AI tools means competing on model speed and price. Selling the work means the better the model gets, the cheaper and faster the service becomes. This framing only makes sense if you accept that the hard part was never the model. It was always the work around the model.

What Design Work Actually Looks Like

The word “design” is deliberate. This isn’t project management. It isn’t change management. It isn’t systems integration, though it includes elements of all three.

Designing an AI workflow means starting from the business outcome and working backward. Not “we want to use AI for customer support.” Instead: “When a customer contacts us with a billing dispute, what should happen, step by step, and which steps can AI handle reliably?”

That question forces specificity. It requires mapping every step, every decision point, every exception. It requires understanding the AI’s actual capabilities and limitations for each step. Not what the vendor demo showed. What the model does when the input is messy, ambiguous, or in a language it handles poorly.

It also requires making tradeoffs. AI might classify 90% of incoming requests correctly. What happens to the other 10%? If a misclassified request means a VIP client waits four hours for a response, the design needs to account for that. If it means a low-priority ticket gets slightly delayed, the tolerance is different. These are design decisions. The model can’t make them. The tool vendor doesn’t make them. Someone who understands both the business and the technology has to sit down and think it through.
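A tradeoff like this can be written down as a rule. In the hypothetical sketch below, the customer tiers, queue names, and threshold values are assumptions chosen for illustration. The idea is that the more a misrouted request costs, the more confident the classifier has to be before it acts without a human.

```python
# Hypothetical thresholds: the costlier a misrouted request, the more
# confident the classifier must be before it routes without human review.
AUTO_ROUTE_THRESHOLD = {
    "vip": 0.99,
    "standard": 0.90,
    "low_priority": 0.70,
}


def route(customer_tier: str, predicted_queue: str, confidence: float) -> str:
    """Send the request to the predicted queue, or to human triage if unsure."""
    # Unknown tiers get the strictest threshold rather than a lenient default.
    strictest = max(AUTO_ROUTE_THRESHOLD.values())
    threshold = AUTO_ROUTE_THRESHOLD.get(customer_tier, strictest)
    if confidence >= threshold:
        return predicted_queue
    return "human_triage"
```

With these numbers, a billing prediction at 0.92 confidence goes straight to the billing queue for a standard customer and to human triage for a VIP. The model produced the same prediction both times. The design decided what to do with it.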

This work is specific to each company. A 50-person law firm and a 50-person accounting firm might use the same AI tools. But their workflows, their edge cases, their failure modes, and their tolerance for error are completely different. The technology is general. The design is always specific.

No tool vendor does this for you. They sell the kitchen equipment and leave you to figure out how to run the restaurant.

The Question Worth Asking

The next time an AI project stalls, skip the model evaluation. Skip the vendor comparison. Skip the ROI spreadsheet.

Ask a simpler question: did anyone design the workflow?

Not the technology architecture. The workflow. Who does what, in what order, with what inputs, producing what outputs, with what fallbacks when something goes wrong.
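That description is concrete enough to write down and check. The sketch below is a minimal illustration, assuming a made-up three-step slice of a law firm intake; the step names, owners, and the `design_gaps` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    owner: str            # "ai" or a human role
    inputs: list[str]
    outputs: list[str]
    fallback: str = ""    # what happens when the step fails


def design_gaps(steps: list[Step], initial_inputs: set[str]) -> list[str]:
    """List design problems: inputs nothing produces, AI steps with no fallback."""
    gaps = []
    available = set(initial_inputs)
    for step in steps:
        for needed in step.inputs:
            if needed not in available:
                gaps.append(f"{step.name}: nothing produces '{needed}'")
        if step.owner == "ai" and not step.fallback:
            gaps.append(f"{step.name}: AI step has no fallback")
        available.update(step.outputs)
    return gaps


workflow = [
    Step("answer_call", "ai", ["phone_call"], ["transcript"],
         fallback="ring receptionist"),
    Step("qualify_caller", "ai", ["transcript"], ["case_details"]),
    Step("conflict_check", "paralegal",
         ["case_details", "client_records"], ["conflict_result"]),
]

for gap in design_gaps(workflow, initial_inputs={"phone_call"}):
    print(gap)
# qualify_caller: AI step has no fallback
# conflict_check: nothing produces 'client_records'
```

Both gaps are design gaps. Neither one would show up in a model evaluation.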

If the answer is no, the project didn’t fail because of AI. It failed because the design work never happened.